| Master software performance in just 16 hours! Join our Software Optimization for the Memory Subsystem Workshop taking place from May 18th to May 21st. Click here to express interest or register. |

For all the engineers who like to tinker with software performance, vectorization is the holy grail: if it vectorizes, this means that it runs faster. Unfortunately, many times this is not the case, and the results of forcing vectorization by any means can mean lower performance. This happens when vectorization hits the memory wall: although the CPU is using vector instructions to speed up processing, in reality, the CPU is waiting for the data to arrive from the memory. Another consequence is that the CPU is consuming more energy since using the vector units in the CPU increases power consumption.

Do you need to discuss a performance problem in your project? Or maybe you want a vectorization training for yourself or your team? Contact us

You can also subscribe to our mailing list (link top right of this page) or follow us on LinkedIn , Twitter or Mastodon and get notified as soon as new content becomes available.

Introduction

Here we give a short introduction about vector instruction, vectorization, data gathering and the memory wall. If these terms are familiar to you, you can skip this section.

What are vector instructions?

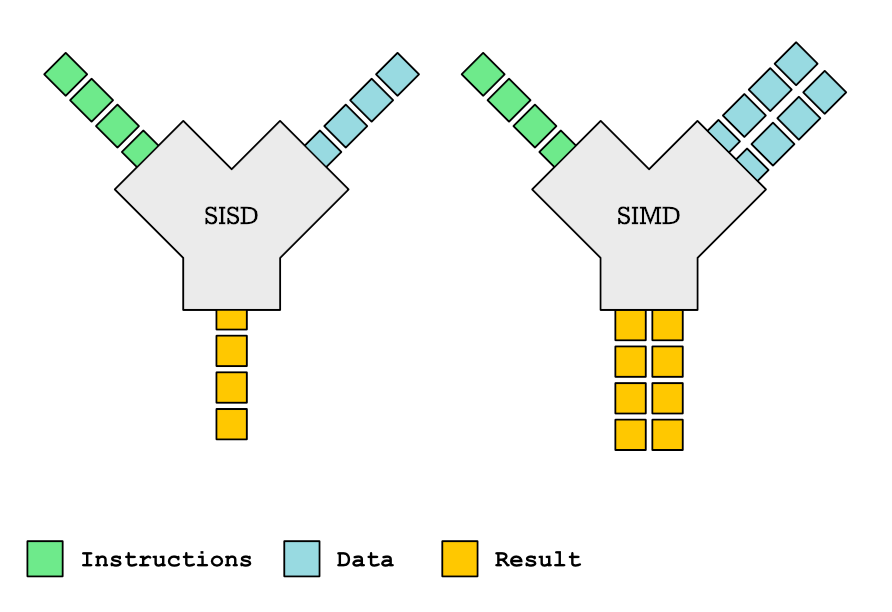

A vector instruction works with more than one piece of data. For example, a vector load will load four consecutive doubles to a special vector register which can hold four doubles. The CPU has all sorts of instructions that work on 4 double values simultaneously: it can add 4 doubles, do sqrt on four doubles, find the maximum of four doubles etc.

We talk in much more detail about vector instructions and vectorization in this post. It is a prerequisite to understanding what is going on in this post.

What are vector gather instructions?

Vector instruction set has a load instruction that can load N identical consecutive values from the memory. For example, AVX2 instruction set (which almost every X86-64 based CPU produced in the last five years has) has a vmovapd instruction. This instruction loads four consecutive doubles to a vector register that can hold four doubles. Many applications work on arrays of doubles and process the array from 0 to N with increment 1, and this instruction can be used to speed up this kind of memory access. Many other applications however need an instruction that can load from any four addresses, not only four consecutive addresses.

AVX2 instruction set introduces a collection of gather load instructions. Gather instructions (as opposed to load) can in principle load data from any address.

Sidenote: There is a corresponding store operation, that can store N pieces of data to any location. These instructions are called scatter instructions, because they can store data to any location (as opposed to storing data to four consecutive memory locations). These instructions are not commonly available on desktop systems, but they are available on servers (through AVX512 and SVE vector instruction sets).

What is the problem with vector gather instructions?

Memory is much slower than CPU, and many times CPU has to wait for the memory. For best performance, the program needs to store its data in an array, and access it sequentially from one side to another. If this is the case, the available memory bandwidth is used the most optimally.

Vectorization puts a large additional pressure on the memory subsystem because instead of loading just one value it loads N values. As we already said, the best utilization of the available bandwidth happens when the program is accessing an array sequentially. For this precise reason, many vector instruction set architectures only have load instructions and no gather instructions. Gathering data is so expensive, that in the event the program needs to gather data, it pays off to just do everything in the old-fashioned, non-vectorized way.

In this post we will present an experiment and try to evaluate the efficacy of AVX2 gather instruction. This should tell us what are the conditions when vectorization actually hurts performance because the program is hitting the memory wall.

The experiment

The experiment is quite simple. Here is the loop we use for the testing (the source code is available here):

for (int i = 0; i < len; i++) {

b[i] = a[indexes[i]];

}The addressing of array a is not sequential because we access it indirectly through array index. If we wanted to vectorize it, we need to use the vector gather instruction vgatherdpd through corresponding AVX2 intrinsic _mm256_i32gather_pd.

To observe the behavior of data gathering, we change the following parameters:

- Length of the array stored in variable

len. We start from the smallest array (1K entries) and finish with the largest array (64M entries). The sizes go like this 1K, 2K, 4K, 8K, …, 1M, 2M, 4M, …, 32M, 64M. - The array

indexeswhich holds the entries into arrayahas one of the following properties:- Either it has completely random values between 0 and

len - 1. We call this arrangement random and the corresponding memory access pattern random access pattern. Or, - It has predictable value, where the current value is calculated according to formula:

current_value = (previous_value + STRIDE) % array_length

This kind of pattern is very common, e.g. if we are iterating over an array of structs. We call this arrangement strided and the corresponding access pattern strided access pattern. We pick following values for theSTRIDE: 1, 2, 4, 8, …, 128, 256, 512, …, 4092, 8192.

- Either it has completely random values between 0 and

In total, we do 64M lookups, regardless of array size. So if the size of the array is 1K, we repeat the experiment 64K times, if the size of the array is 1M, we repeat the experiment 64 times, and if the size of the array is 64K, we do the experiment only once. We measure the cumulative runtime.

We compare the scalar version of the loop with a manually vectorized loop that uses vgatherdpd instruction to gather data. We want to see which version is faster depending on the array length and the memory access pattern.

What do we expect from the experiment?

We have the following expectations:

- The best runtime will be for the case where array

indexeshas a strided arrangement with stride 1. As the stride increase, the runtime will be worse. This is expected because smaller strides use the memory bandwidth more efficiently. - The best runtimes will be for small array lengths. Smaller arrays can fit better into available data caches. As the size of the array grows, so does the data cache miss rate increases and consequentally, the runtime gets longer.

- Random memory accesses will fare worse than strided memory accesses. With strided memory accesses, the CPU can predict the next data it needs and prefetch it. This is not possible for the random memory access pattern.

- Smaller strided accesses will fare better than large strided accesses. The reason is the block nature of the data cache: data is brough to the data cache in blocks of (most often) 64 bytes. With smaller strided accesses there is a larger chance that the data needed is in the same block and therefore already in the data cache.

- The vectorized version will be faster when the data set is small, but will become slower when the data set is large.

Do you need to discuss a performance problem in your project? Or maybe you want a vectorization training for yourself or your team? Contact us

You can also subscribe to our mailing list (link top right of this page) or follow us on LinkedIn , Twitter or Mastodon and get notified as soon as new content becomes available.

Down to numbers

Now that we have everything set up, let’s get down to numbers. The full data sheet with all the numbers is available here, bellow we give in more detail the most interesting details.

Vector vs scalar with random memory accesses depending on array length

For very small array sizes the vector version is a bit faster, but not significantly. After about the size of 512K, the scalar version becomes faster, and this difference in speed is maintained most of the time.

Even though the vector version executed significantly fewer instructions compared to the scalar version, it is nevertheless slower when the size of the array reaches a certain threshold. We say that the program has hit the memory wall: it is predominantly retrieving data from the main memory and vectorization is useless.

The CPU we used for testing (Intel(R) Core(TM) i5-10210U CPU) has 6 MB of L3 cache. We are accessing two double arrays and one integer array. This means that for an array size of 307K, everything still fits nicely in the L3 cache. With an array length of 512K, most of the data will be in the L3 data cache, although we can expect some data cache misses. With an array length of 1M, most accesses are served from the main memory and the benefits of vectorization disappear completely.

When does the vector version first becomes visibily slower than the scalar version?

| Array Length | Access Type |

|---|---|

| 1K – 512 K | Never |

| 1M – 2M | With stride 8 |

| 4M – 64M | With stride 2 |

Until the array length of 512 K, the vector version is faster or the same speed as the scalar version regardless of memory access type. When the accesses have to be made predominantly from the main memory (as opposed to the data caches), the vectorization very quickly loses its edge. We see that, when the size of the array is larger than 2M, vectorization doesn’t pay off at all, even when the stride is 2 (we are accessing every other element in the array in an easy to predict manner).

When does vectorization make this code run faster?

We plot the vector to scalar runtime ratio chart for all array lengths and different memory access patterns. If the value is larger than one, that means that the vector version is faster and we see performance improvements with vectorization. If the value is smaller than one, that means that the vector version is slower and we see performance degradation with vectorization.

Vectorization always makes sense when the memory access pattern is sequential (stride 1). If we know this at compile-time, we don’t need to use a gather instruction, we can use a regular load.

With all the other memory access patterns, vectorization quickly loses its edge once the data set doesn’t fit the LL cache1 anymore.

Conclusions

There are many conclusions to this small experiment. But we must warn that it is possible to derive many wrong conclusions. Let’s go through them.

True: If the data set mostly fits the data cache, the vectorization always pays off

In our testing environment, the fact that the data set fits the last level cache always meant that the vectorization paid off. For example, if you have a hash map or a tree whose size is smaller than the data set, your code could benefit from moving to a vectorized implementation.

Another question is if these results apply to other systems and architectures as well. I didn’t test those, unfortunately, but I can give an educated opinion. I would expect that this is true for all HPC and desktop configurations, and probably true for embedded systems as well (with a remark that embedded systems often have smaller caches, so the same program that benefits from vectorization on a desktop system might not benefit on an embedded system).

True: If the memory access pattern is sequential (stride 1), the vectorization always pays off

This holds true not only in our test, but universally. Even loops that do not do any computations, but only move pieces of data from one place to another benefit from vectorization (vectorized implementations of memcpy are always faster than their scalar counterparts).

False: When the data set doesn’t fit the data caches, vectorized code is slower

After reading this report, one could get the impression that overall the vectorized code is useless if the data set doesn’t fit the data cache. However, this is a wrong conclusion. The loop has a very low arithmetic intensity2: it basically shuffles data around memory, without doing any processing.

The benefits of vectorization become apparent only with loops with high arithmetic intensity: the higher the intensity, the higher the benefit. If the loop has enough arithmetic operations in it, it can benefit from vectorization even when needs to gather/scatter data.

False: Strided accesses are as bad as random accesses

After the data set didn’t fit the data cache, vectorized code was almost always slower than its non-vectorized counterpart, regardless of the memory access pattern. One could make a conclusion that strided memory accesses are as bad as random memory accesses with regards to vectorization.

This was the case in our tests, but I am not sure it can be generalized for all the systems. HPC systems have different memory configurations and the compilers vectorize automatically loops with that kind of memory access pattern. Does that make those loops run faster? I don’t know.

Do you need to discuss a performance problem in your project? Or maybe you want a vectorization training for yourself or your team? Contact us

You can also subscribe to our mailing list (link top right of this page) or follow us on LinkedIn , Twitter or Mastodon and get notified as soon as new content becomes available.

Overall

Overall, the conclusion is clear. For efficient vectorization, either the data set needs to fit the data cache or the accesses need to be sequential. Without it, vectorization will most likely not have effect, but again, this depends on other factors as well: hardware architecture and loop’s arithmetic intensity.

This is not memory bandwidth but rather memory latency that you are facing here. CPU can’t predict effectively where next memory access will happen. But this data is actually available and you can increase performance greatly by prefetching 20-50 indexes ahead. This will work especially well for random indexes.

Memory bandwidth is the limit for strided accesses, in contrast where memory latency is the limit for random accesses. Using software prefetching (__builtin_prefetch) didn’t help in this example, and I think the reason is the low arithmetic intensity of the test loop. If there were some useful work to do between loading and storing, then prefetching would probably make sense.

Hi Ivica!

Did you check AVX512? At least Intel claimed in the past the penalty for gather operations decreased compared to AVX2. With AVX512 I see a speed up between 1.2x and 1.8x in cases where I have 5-10 gathers per 10-20 FP operations compared to the sequential version. With AVX2 the speed up is between 0.95x and 1.2x. I wonder if its really due to the performance of gather and the larger SIMD lanes or just the larger SIMD lanes.

I didn’t check AVX512 because I didn’t have the necessary hardware. But it would be fun to measure dependency between speed, FP per loop iteration and dataset size. You can check if the reason is larger SIMD lanes or only something else by rerunning the examples from this post on your AVX512 hardware.