We use a matrix multiplication example to investigate loop interchange and loop tiling as techniques to speed up programs that work with matrices.
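As a rough illustration of loop interchange, here is a minimal sketch that assumes square `n x n` matrices stored row-major in flat vectors. The function names `matmul_ijk` and `matmul_ikj` are made up for this example and are not taken from the post itself.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Naive i-j-k order: the innermost loop reads b with stride n (column-wise),
// which tends to be unfriendly to the data cache for large n.
void matmul_ijk(const std::vector<double>& a, const std::vector<double>& b,
                std::vector<double>& c, std::size_t n) {
    for (std::size_t i = 0; i < n; ++i) {
        for (std::size_t j = 0; j < n; ++j) {
            double sum = 0.0;
            for (std::size_t k = 0; k < n; ++k) {
                sum += a[i * n + k] * b[k * n + j];
            }
            c[i * n + j] = sum;
        }
    }
}

// Interchanged i-k-j order: the innermost loop walks b and c sequentially.
// The result is the same; only the memory access pattern changes.
void matmul_ikj(const std::vector<double>& a, const std::vector<double>& b,
                std::vector<double>& c, std::size_t n) {
    std::fill(c.begin(), c.end(), 0.0);  // c accumulates, so start from zero
    for (std::size_t i = 0; i < n; ++i) {
        for (std::size_t k = 0; k < n; ++k) {
            const double aik = a[i * n + k];
            for (std::size_t j = 0; j < n; ++j) {
                c[i * n + j] += aik * b[k * n + j];
            }
        }
    }
}
```

Both functions compute the same product. Whether the interchanged version is actually faster depends on matrix size, compiler, and hardware, so it is worth measuring.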

A post explaining how a few small changes in the right places can have a drastic effect on the performance of an image processing algorithm named Canny.

We explore how the size of a class and the layout of its data members affect your program’s speed.

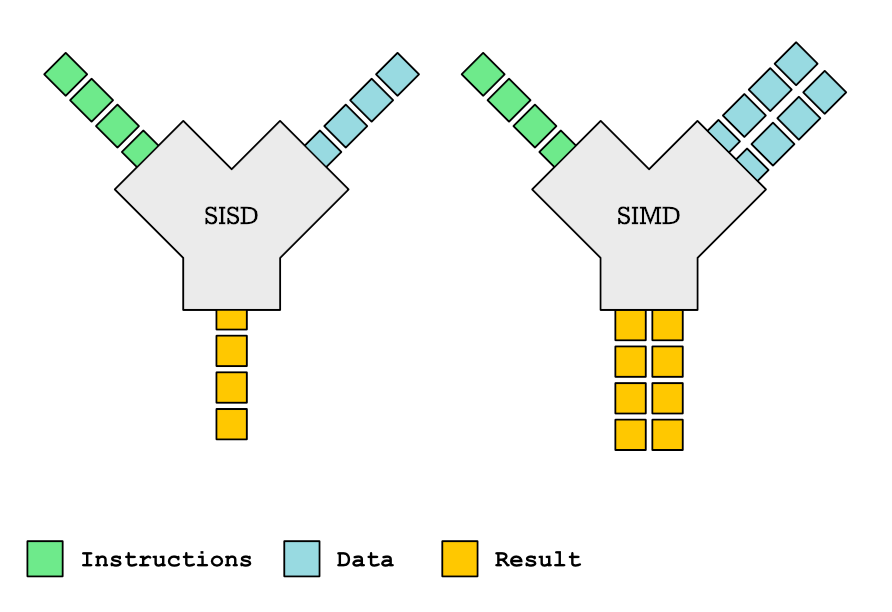

This is the first article about hardware support for parallelization. We talk about SIMD, an extension that almost every modern processor has and that lets you speed up your program.

We investigate the performance impact of multithreading.

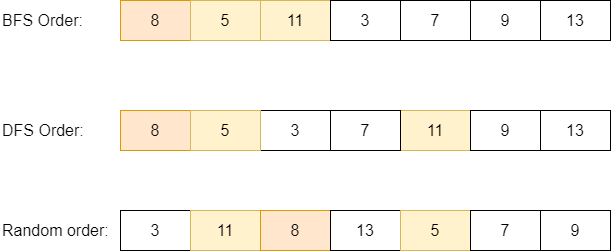

When you process (search, insert, etc.) a data structure with a random access pattern, performance suffers from many data cache misses. Read on to learn how to use explicit data prefetching to speed up access to your data structure.
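A minimal sketch of explicit prefetching, assuming GCC or Clang (the `__builtin_prefetch` builtin is compiler-specific). The function name `sum_indexed` and the prefetch distance `kAhead` are hypothetical choices for this illustration, and a good distance has to be found by measurement.

```cpp
#include <cstddef>
#include <vector>

// Sums data[idx[i]] for every i. The indices may be in random order,
// so each access to data is a likely cache miss for large inputs.
long long sum_indexed(const std::vector<int>& data,
                      const std::vector<std::size_t>& idx) {
    constexpr std::size_t kAhead = 8;  // assumed prefetch distance, tune by measuring
    long long sum = 0;
    for (std::size_t i = 0; i < idx.size(); ++i) {
#if defined(__GNUC__) || defined(__clang__)
        // Ask the CPU to start loading the element we will need kAhead iterations from now.
        if (i + kAhead < idx.size()) {
            __builtin_prefetch(&data[idx[i + kAhead]]);
        }
#endif
        sum += data[idx[i]];
    }
    return sum;
}
```

The prefetch is only a hint and never changes the result. On other compilers the sketch falls back to the plain loop.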

We will talk about expensive instructions in modern CPUs and how to avoid them to speed up your program.

If your program uses dynamic memory, its speed depends not only on allocation time but also on memory access time. Here we investigate how memory access time depends on the memory layout of your data structure, and how to speed up your program by laying out your data structure optimally.

In this article we investigate how branches influence the performance of the code and what we can do to improve the speed of branch-heavy code.
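One common way to reduce the cost of a hard-to-predict branch is to replace it with arithmetic. The sketch below is only an illustration with hypothetical function names. Compilers may already turn the first version into branch-free code on their own.

```cpp
#include <vector>

// Branchy version: the condition inside the loop can be mispredicted
// often when the input values are unordered.
long long sum_above_branchy(const std::vector<int>& v, int threshold) {
    long long sum = 0;
    for (int x : v) {
        if (x > threshold) {
            sum += x;
        }
    }
    return sum;
}

// Branchless version: the comparison yields 0 or 1, which is used as a multiplier,
// so the loop body has no conditional jump that depends on the data.
long long sum_above_branchless(const std::vector<int>& v, int threshold) {
    long long sum = 0;
    for (int x : v) {
        sum += static_cast<long long>(x) * (x > threshold);
    }
    return sum;
}
```

The branchless form does a little more work per element, so it tends to pay off only when the branch is hard to predict.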

CPU dispatching is all about making your code portable and fast. We will talk about how to detect the features your CPU has at its disposal and select the fastest function for that particular CPU without the need to recompile your software.
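A minimal sketch of runtime dispatching, assuming GCC or Clang on x86, where `__builtin_cpu_supports` is available. All function names are hypothetical. `sum_avx2_standin` is only a placeholder for a real AVX2 implementation, which would normally use intrinsics and be compiled with the matching target flags.

```cpp
#include <cstddef>

using SumFn = long long (*)(const int*, std::size_t);

// Portable baseline that runs on any CPU.
long long sum_generic(const int* p, std::size_t n) {
    long long sum = 0;
    for (std::size_t i = 0; i < n; ++i) {
        sum += p[i];
    }
    return sum;
}

// Placeholder for an AVX2-optimized version (assumption: it would live in a
// separate translation unit built with AVX2 enabled).
long long sum_avx2_standin(const int* p, std::size_t n) {
    return sum_generic(p, n);
}

// Picks an implementation based on what the running CPU reports.
SumFn select_sum() {
#if (defined(__GNUC__) || defined(__clang__)) && \
    (defined(__x86_64__) || defined(__i386__))
    __builtin_cpu_init();
    if (__builtin_cpu_supports("avx2")) {
        return sum_avx2_standin;
    }
#endif
    return sum_generic;
}

// Public entry point: the selection runs once, on the first call.
long long sum(const int* p, std::size_t n) {
    static const SumFn fn = select_sum();
    return fn(p, n);
}
```

Callers only ever use `sum`, so the same binary runs on older CPUs and still picks the faster path where it is available.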