| Master software performance in just 16 hours! Join our Software Optimization for the Memory Subsystem Workshop taking place from May 18th to May 21st. Click here to express interest or register. |

This is the 15th post in the series about memory subsystem optimizations. You can also explore other posts in the same series here.

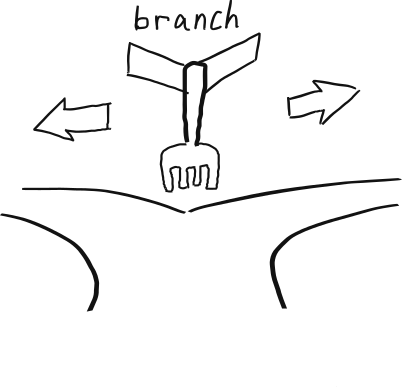

In this post we will explore how branch prediction interacts with the memory subsystem. We assume you know what branch prediction is and also understand the memory subsystem on modern processors.

Branch Prediction and Memory Subsystem

A Quick Introduction to Branch Prediction and Speculative Execution

Branch prediction circuitry is part of many modern CPUs and it is used to speed up computation when the CPU doesn’t have enough work. In essence, the CPU tries to predict the outcome of a conditional branch, before the condition of the branch is evaluated. We call this event speculation on the outcome of the branch, and the instructions executed after this branch are executed speculatively, meaning their results will get discarded if prediction turned out to be wrong. Let’s look at the example code:

for (size_t i = 0; i < n; i++) {

if (a[i] >= 0) {

positive++;

} else {

negative++;

}

}In the above example, the result of the condition if (a[i] >= 0) can be speculated, if operand a[i] is not available. Let’s assume that the CPU has predicted that the outcome of this branch will be true. It then starts to execute positive++. Later, the value of a[i] becomes available. If the branch outcome was correctly speculated, then the CPU has done some useful work and nothing is wasted. However, if the branch outcome was mispredicted, the CPU reverts the speculatively executed instructions and start executing the else branch.

Alternative to this would be to wait for the operand a[i] to become available and only then decide which instructions to execute – those in if or else branch. However, in most cases this approach would be slower, for obvious reasons.

Details of Branch Prediction

When a branch prediction is successful, this is an overall win for the performance. So, in the case of branches that are easily to predict1, there is nothing we can do to increase software efficiency or memory subsystem efficiency. But what happens when a branch is mispredicted?

When a branch is mispredicted, it means that instructions that were speculatively executed are not needed and their results should be discarded. Among them, load and store instructions, even when speculatively executed, spend memory subsystem resources. And because of misprediction, these resources were wasted.

If our goal is to decrease the pressure on the memory subsystem, then writing branchless code would be the answer. However, our principal goal is software that runs faster, not software that utilizes the least amount of memory bandwidth. And to make software faster, we need to understand how branch prediction and memory subsystem resources interact.

Interaction Between Speculation and Memory Subsystem

When analyzing the performance of branch prediction, we need to have three things in mind:

- Speculation is generally useful, because it allows the CPU to do potentially useful work. Very often, the reason why the CPU needs to speculate is because an operand hasn’t been read from the memory subsystem – speculation is a way to move forward because of the bottleneck in the memory subsystem.

- If a branch is mispredicted, there is a penalty to pay – the results of speculatively executed instructions needs to be reverted. The price depends on the CPU, but is not too high and it is typically around 10-20 cycles.

- Speculation can increase the required memory subsystem bandwidth, because some data that is fetched will not be used. On the up side, it allows some loads/stores to be issued earlier, which is very important for high-latency loads (coming from the memory or larger data caches).

The alternative to branchful code is branchless code, where both the bodies of if and else statements are evaluated, and the correct result selected based on the condition. This guarantees no branch misprediction penalty, but has some disadvantages:

- Evaluating bodies of both

ifandelsestatements is more expensive. - The CPU cannot speculate. In practice, this means some instructions will get issued later then with branchful versions.

As it is more apparent now, the question of performance and branch prediction is complex, because it is a compromise between three factors: branch misprediction penalty, load/store latency and memory subsystem bandwidth. In practice, however, the software performance in the presence of branches looks like this:

- If the data is mostly coming from fast caches, like L1 or possibly L2 caches, then branchless version will typically be faster. Loads from L1 and L2 caches have low latencies; in this case the misprediction penalty will be higher than the latency saved because loads were issued earlier.

- If the data is mostly coming from slower caches of memory, then branchful version will typically be faster. These loads have higher latencies and issuing them earlier, even when they are not needed, will have a positive effect on performance.

Do you need to discuss a performance problem in your project? Or maybe you want a vectorization training for yourself or your team? Contact us

You can also subscribe to our mailing list (link top right of this page) or follow us on LinkedIn , Twitter or Mastodon and get notified as soon as new content becomes available.

Techniques

To make software faster, you would need to select a branchless or a branchful implementation of the algorithm, as described earlier. However, implementing branchless code in C/C++ turns out to be not that simple!

When it comes the branches, the compiler is free to emit or not to emit branches according to its own heuristics. In practice, most of the time it will emit branchful code, but you would need to check this in the compiler’s assembly report to make sure. That being said, implementing branchless version can be a challenge. In this section we present two techniques on how to implement branchless algorithms.

Branchless using assembly or compiler intrinsics

The only 100% certain way to guarantee that a piece of code is written as branchless code is by writing it in assembly or compiler intrinsics. Going into details about how to write assembly is beyond the scope of this post, but for the reference, here are the two versions of binary search we used later in testing, branchful original and branchless with conditional moves.

On X86-64, if your code is working with floats or doubles, you can use compiler intrinsics that you would normally using for vectorization. Compiler intrinsics for floats and doubles exist for non-vectorized code as well, since floating-point processing on modern X86-64 processors is done in vector registers. Here is an example of branchless quicksort we used later in testing written using compiler intrinsics: original code, branchless code.

Branchless using arithmetics

You can use arithmetics to go branchless. Take the following code segment as an example:

if (a[i] > 0) {

cnt++;

}Rewriting using arithmetic takes advantage of the fact that the expression a[i] > 0 has an arithmetic value 1 if true and 0 if false. So the whole expression can be rewritten as:

cnt += (a[i] > 0);

For a more complicated example of using arithmetics to implement a branchless version of binary search, take a look at here: original, branchless using arithmetics.

Experiments

Here we present two experiments to measure how speculation and memory subsystem interact. All the experiments were executed on Intel(R) Core(TM) i5-10210U system. Each experiment was executed five times, and we report an average result. Standard deviation was small. The source code for all experiments is available here.

Branchless Binary Search

For the first experiment, we measure the performance of three versions of binary search: simple (branchful), branchless with conditional moves and branchless with arithmetics. The source code of the binary search algorithm is this:

int binary_search(int* array, int number_of_elements, int key) {

int low = 0, high = number_of_elements-1, mid;

while(low <= high) {

mid = (low + high)/2;

if(array[mid] < key)

low = mid + 1;

else if(array[mid] == key)

return mid;

else

high = mid-1;

}

return -1;

}In the branchful version, the values for low and high are speculatively calculated, even before array[mid] has been fetched from the memory subsystem. But these speculations are correct only 50% of the time, so this code suffers from branch mispredictions.

Let’s measure the performance of binary search on arrays of various sizes. In all cases we perform 4M lookups.

| Array Size (in elements) | Original | Conditional Moves | Arithmetics |

|---|---|---|---|

| 4 K | Runtime: 0.22 s Instr: 434 M CPI: 1.96 Mem. Data Volume: 0.45 GB | Runtime: 0.14 s Instr: 785 M CPI: 0.728 Mem. Data Volume: 0.25 GB | Runtime: 0.19 s Instr: 1.102 M CPI: 0.69 Mem. Data Volume: 0.32 GB |

| 16 K | Runtime: 0.26 s Instr: 511 M CPI: 2.01 Mem. Data Volume: 0.49 GB | Runtime: 0.19 s Instr: 928 M CPI: 0.77 Mem. Data Volume: 0.39 GB | Runtime: 0.24 s Instr: 1.308 M CPI: 0.72 Mem. Data Volume: 0.46 GB |

| 64 K | Runtime: 0.32 s Instr: 584 M CPI: 2.143 Mem. Data Volume: 0.48 GB | Runtime: 0.24 s Instr: 1.064 M CPI: 0.90 Mem. Data Volume: 0.25 GB | Runtime: 0.31 Instr: 1.504 CPI: 0.82 Mem. Data Volume: 0.26 GB |

| 256 K | Runtime: 0.43 s Instr: 646 M CPI: 2.59 Mem. Data Volume: 0.36 GB | Runtime: 0.39 s Instr: 1.199 M CPI: 1.28 Mem. Data Volume: 0.32 GB | Runtime: 0.47 s Instr: 1.698 M CPI: 1.09 Mem. Data Volume: 0.36 GB |

| 1 M | Runtime: 0.56 s Instr: 727 M CPI: 3.05 Mem. Data Volume: 0.67 GB | Runtime: 0.59 s Instr: 1.333 M CPI: 1.72 Mem. Data Volume: 0.59 GB | Runtime: 0.70 s Instr: 1.891 M CPI: 1.42 Mem. Data Volume: 0.68 GB |

| 4 M | Runtime: 1.127 s Instr: 798 M CPI: 4.65 Mem. Data Volume: 9.94 GB | Runtime: 1.48 s Instr: 1.467 M CPI: 3.1 Mem. Data Volume: 3.75 GB | Runtime: 1.59 s Instr: 2.084 M CPI: 2.45 Mem. Data Volume: 3.9 GB |

| 16 M | Runtime: 1.65 s Instr: 870 M CPI: 6.26 Mem. Data Volume: 18.48 GB | Runtime: 2.75 s Instr: 1.601 CPI: 4.16 Mem. Data Volume: 6.95 GB | Runtime: 2.90 s Instr: 2.277 M CPI: 3.18 Mem. Data Volume: 7.05 GB |

If you observe the table above you will notice:

- The conditional move version is faster than the branchful version until the array size 256 K elements. At the size of 1 M, the branchful version is faster.

- The conditional move version executes about two times more instructions than the branchful version.

- The arithmetic version executes executes about 2.5 times more instructions than the branchful version.

- The branchful version loads more data from the memory than the other two versions. For the largest array size, it transfers 2.5 more data then the other versions.

There is a price to branch misprediction, but there is also the price of not issuing loads soon enough. For smaller array sizes, branchless version is faster, but for larger array size, branchful version is faster.

Do you need to discuss a performance problem in your project? Or maybe you want a vectorization training for yourself or your team? Contact us

You can also subscribe to our mailing list (link top right of this page) or follow us on LinkedIn , Twitter or Mastodon and get notified as soon as new content becomes available.

Branchless Quicksort and Heapsort

For the second experiment, we pick the Quicksort sorting algorithm2. The key component of quicksort is the data partitioning. For partitioning, we pick a pivot element randomly from the array. Next, the data in the array is partitioned into two halves: the left half where all elements smaller than the pivot are and the right half with the remaining. The partitioning algorithm looks like this:

static int partition(std::vector<float>& vector, int low, int high) {

float pivot = vector[high];

int i = (low - 1);

for (int j = low; j < high; j++) {

if (vector[j] <= pivot) {

i++;

std::swap(vector[i], vector[j]);

}

}

i = i + 1;

std::swap(vector[i], vector[high]);

return i;

}The critical branch is on line 7. This branch is difficult to predict. The CPU speculatively executes data swap, which consists of two memory loads and two memory stores. The access to data is sequential, which means that the algorithm optimally uses the available memory bandwidth.

We implemented the same algorithm using compiler intrinsics to make it branchless. Here is the runtime for sorting an array of 32 M floats:

| Metric | Quicksort branchful | Quicksort branchless |

|---|---|---|

| Runtime | 2.94 s | 1.69 s |

| Instructions | 8.81 billion | 14.7 billion |

| Cycles | 11.4 billion | 6.5 billion |

| CPI | 1.3 | 0.44 |

| Memory Data Volume | 5.23 GB | 4.21 GB |

Going branchless has actually made sorting faster, although it was executing almost two times more instructions! The algorithm is accessing data sequentially, which means that the data prefetcher is able to quickly deliver the data to the CPU.

In this case, the price of speculating wrongly is high compared to latency of loads, and the branchless version is version. Branchless version also fetches less data from the memory subsystem.

Let’s contrast this with heapsort implementation. The heapsort implementation builds an in-array heap. The algorithm looks like this:

static void heapify(std::vector<float>& vec, int n, int i) {

int largest = i;

int start = 2 * i + 1;

int end = std::min(start + 2, n);

for (int j = start; j < end; j++) {

if (vec[j] > vec[largest]) {

largest = j;

}

}

if (largest != i) {

std::swap(vec[i], vec[largest]);

heapify_k(vec, n, largest);

}

}The loop on lines 7-11 is short and has at most two iterations. Then the function heapify calls itself. This algorithm creates a heap, by building in-array tree. If the current node of the tree is i, the left child is at position 2 * i + 1 and the right child is at position 2 * i + 2. Performing a search on such a tree results, at least from the hardware perspective, with a large number of random memory accesses.

Here are the runtimes of heapsort, both branchless and branchfull version on the same array of 32M floats:

| Heapsort branchful | Heapsort branchless | |

|---|---|---|

| Runtime | 12.6 s | 29.1 s |

| Instructions | 8.94 billion | 35.5 billion |

| Cycles | 41.6 billion | 73.5 billion |

| CPI | 1.06 | 2.07 |

| Memory Data Volume | 69.3 GB | 79.3 GB |

If we look at these numbers, we see the complete opposite to Quicksort algorithm. The branchful versionis two times faster than the branchless version. By its nature, heapsort performs a lot of random memory accesses. On such a large array, random memory accesses are mostly served from the memory, not the caches. They have a long latency and ideally they should be issued as soon as possible.

Like what you are reading? Follow us on LinkedIn , Twitter or Mastodon and get notified as soon as new content becomes available.

Need help with software performance? Contact us!

- Many branches are easy to predict, because they mostly evaluate to true, false, or some predictable pattern [↩]

- This measurement has already been done in the post Why is quicksort faster than heapsort? And how to make them faster? [↩]

Nice blog! could i translate into chinese version? thanks

Sure! But please leave a link to this post at the beginning of your translation.

ok! thanks!